
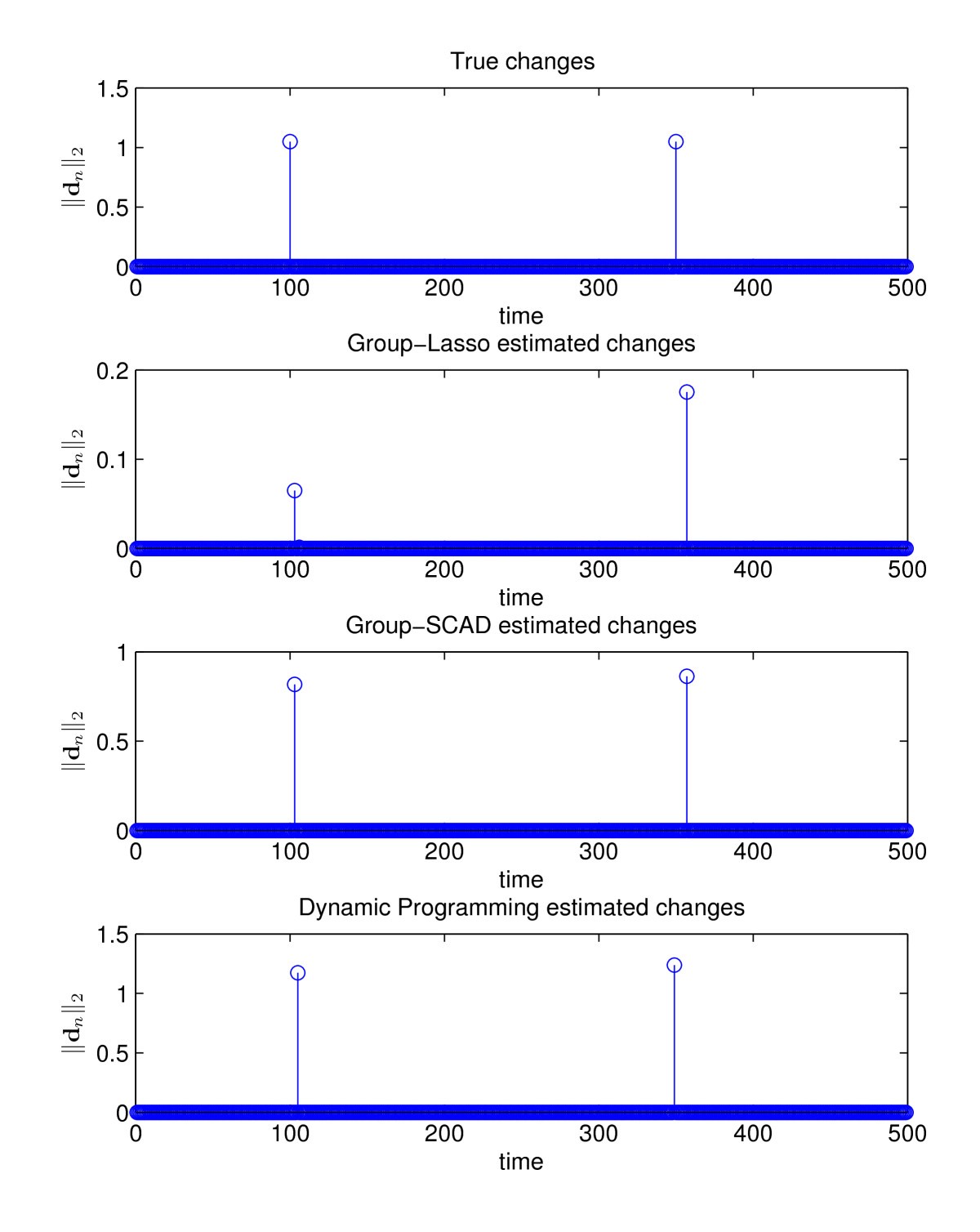

Abstract: We consider the problem of estimating the parameters of a multivariate Bernoulli process with auto-regressive feedback in the high-dimensional setting where the number of samples available is much less than the number of parameters. This problem arises in learning interconnections of networks of dynamical systems with spiking or binary-valued data. We also allow the process to depend on its past up to a lag $p$, for a general $p \geq 1$, allowing for more realistic modeling in many applications. We propose and analyze an $\ell_1$-regularized maximum likelihood (ML) estimator under the assumption that the parameter tensor is approximately sparse. Rigorous analysis of such estimators is made challenging by the dependent and non-Gaussian nature of the process as well as the presence of the nonlinearities and multi-level feedback. We derive precise upper bounds on the mean-squared estimation error in terms of the number of samples, dimensions of the process, the lag $p$ and other key statistical properties of the model.

Abstract: In this paper, we propose distributed algorithms that solve a system of Boolean equations over a network, where each node in the network possesses only one Boolean equation from the system. The Boolean equation assigned at any particular node is a private equation known to this node only, and the nodes aim to compute the exact set of solutions to the system without exchanging their local equations. We show that each private Boolean equation can be locally lifted to a linear algebraic equation under a basis of Boolean vectors, leading to a network linear equation that is distributedly solvable. A number of exact or approximate solutions to the induced linear equation are then computed at each node from different initial values. The solutions to the original Boolean equations are eventually computed locally via a Boolean vector search algorithm. We prove that given solvable Boolean equations, when the initial values of the nodes for the distributed linear equation solving step are selected i.i.d. according to a uniform distribution in a high-dimensional cube, our algorithms return the exact solution set of the Boolean equations at each node with high probability.

Abstract: Fitting multivariate autoregressive (AR) models is fundamental for time-series data analysis in a wide range of applications. This article considers the problem of learning a $p$-lag multivariate AR model where each time step involves a linear combination of the past $p$ states followed by a probabilistic, possibly nonlinear, mapping to the next state. The problem is to learn the linear connectivity tensor from observations of the states. We focus on the sparse setting, which arises in applications with a limited number of direct connections between variables. For such problems, $\ell_1$-regularized maximum likelihood estimation (or M-estimation more generally) is often straightforward to apply and works well in practice. However, the analysis of such methods is difficult due to the feedback in the state dynamics and the presence of nonlinearities, especially when the underlying process is non-Gaussian. Our main result provides a bound on the mean-squared error of the estimated connectivity tensor as a function of the sparsity and the number of samples, for a class of discrete multivariate AR models, in the high-dimensional regime. Importantly, the bound indicates that, with sufficient sparsity, consistent estimation is possible in cases where the number of samples is significantly less than the total number of parameters.
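
As a rough illustration of the setting described here, the sketch below simulates a small $p$-lag multivariate Bernoulli AR process with a sparse connectivity tensor and then fits it by $\ell_1$-regularized maximum likelihood. The logistic link, the proximal-gradient (ISTA) solver, the problem sizes, and every function name are assumptions made for this example; none of them are taken from the papers. The sizes are also chosen so that samples outnumber parameters, which is an easier regime than the high-dimensional one the abstracts target.

```python
import numpy as np

def simulate_bernoulli_ar(A, bias, T, rng):
    """Simulate x_t in {0,1}^M. Assumed model (logistic link):
    P(x_t[i] = 1 | past) = sigmoid(bias[i] + sum_k A[k, i, :] @ x_{t-1-k})."""
    p, M, _ = A.shape
    X = np.zeros((T + p, M))
    X[:p] = rng.integers(0, 2, size=(p, M))      # arbitrary initial states
    for t in range(p, T + p):
        past = X[t - p:t][::-1]                  # (p, M), most recent state first
        z = bias + np.einsum("kij,kj->i", A, past)
        X[t] = rng.random(M) < 1.0 / (1.0 + np.exp(-z))
    return X

def lagged_design(X, p):
    """Build one feature row per time step by stacking the p previous states."""
    T = X.shape[0] - p
    feats = np.hstack([X[p - 1 - k:p - 1 - k + T] for k in range(p)])  # (T, p*M)
    return feats, X[p:]

def soft_threshold(W, tau):
    """Proximal operator of tau * ||W||_1."""
    return np.sign(W) * np.maximum(np.abs(W) - tau, 0.0)

def fit_l1_logistic(feats, targets, lam, n_iter=500):
    """Minimise the average negative log-likelihood + lam * ||W||_1 with ISTA.
    The intercept b is left unpenalised."""
    n, d = feats.shape
    W = np.zeros((d, targets.shape[1]))
    b = np.zeros(targets.shape[1])
    # Step 1/L, using the bound L <= ||[feats, 1]||_2^2 / (4 n) for the logistic loss.
    aug = np.hstack([feats, np.ones((n, 1))])
    step = 4.0 * n / np.linalg.norm(aug, 2) ** 2
    for _ in range(n_iter):
        probs = 1.0 / (1.0 + np.exp(-(feats @ W + b)))
        resid = (probs - targets) / n            # gradient of the loss w.r.t. the logits
        W = soft_threshold(W - step * feats.T @ resid, step * lam)
        b = b - step * resid.sum(axis=0)
    return W, b

# Illustrative sizes only (hypothetical values, not from the papers).
rng = np.random.default_rng(0)
M, p, T = 8, 2, 4000
A_true = np.zeros((p, M, M))
mask = rng.random((p, M, M)) < 0.1               # roughly 10% of entries are nonzero
A_true[mask] = rng.choice([-2.0, 2.0], size=mask.sum())
bias = -0.5 * np.ones(M)

X = simulate_bernoulli_ar(A_true, bias, T, rng)
feats, targets = lagged_design(X, p)
W, b = fit_l1_logistic(feats, targets, lam=0.01)

# W has rows indexed by (lag k, source j) and columns by target i; map back to A[k, i, j].
A_hat = W.T.reshape(M, p, M).transpose(1, 0, 2)
mse = np.mean((A_hat - A_true) ** 2)
print(f"mean-squared error of the estimated tensor: {mse:.4f}")
```

Because the log-likelihood separates across target nodes, each column of `W` is an independent $\ell_1$-penalised logistic regression on the same lagged features. The penalty weight `lam` is fixed by hand here; in practice it would need to be tuned.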
